In the first three parts of this series, we built four federated subgraphs and connected them with GraphQL, gRPC, and REST. But from the client's perspective, none of that complexity should be visible. The browser sends a single request to a single endpoint and receives a unified response.

Making that work requires two gateways, each responsible for a distinct layer of concerns. Kong handles the API layer — authentication, rate limiting, CORS, request tracking. Apollo Router handles the GraphQL layer — schema composition, query planning, parallel execution, and response merging.

This article traces a request from the browser through both gateways and into the subgraphs, showing how authentication context flows, how query plans are constructed, and why the two-gateway pattern avoids the pitfalls of putting everything in one layer.

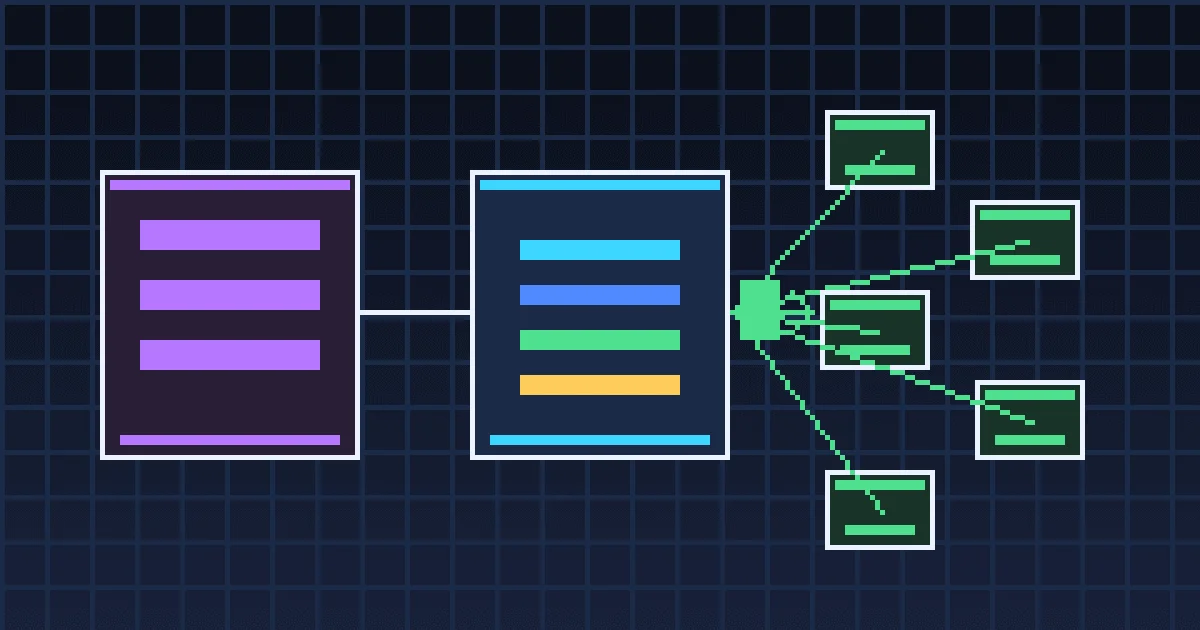

The Two-Gateway Architecture

The full request path. Kong applies API policies and extracts user identity. Apollo Router plans and executes the federated query. Subgraphs receive both the query and the user context.

Why Two Gateways?

A single gateway could theoretically handle everything, but collapsing API concerns and GraphQL concerns into one layer creates a maintenance problem. In this setup, Kong does not parse GraphQL: it treats /graphql as an opaque HTTP endpoint, and this is a feature. Kong's rate limiting, CORS, and auth plugins work identically whether the backend is GraphQL, REST, or gRPC. Apollo Router, in turn, is not responsible for rate limiting or credential validation here. It focuses entirely on understanding the supergraph schema, building efficient query plans, and propagating context. Adding rate limiting or credential validation to the Router would couple GraphQL-specific logic with API-general concerns.

Separation lets each gateway evolve independently. Kong could be swapped for another API gateway (Envoy, Traefik, AWS API Gateway), provided the replacement performs the same credential validation and sets the same user context headers. The Router can be upgraded to newer versions without touching authentication logic.

Kong: Declarative API Configuration

Kong runs in DB-less mode with a declarative YAML configuration. Every route, plugin, and upstream is defined in a single file:

Route Separation

Two services are defined with different routing rules:

- /graphql routes to Apollo Router. All federated queries flow through this path. Rate limited to 60 requests per minute: enough for a storefront but protective against abuse.
- /auth/* routes directly to the User Service, bypassing the Router entirely. Authentication endpoints are public (no credential check required) and more aggressively rate limited, at 20 requests per minute, to prevent brute-force attacks.

This split is important: login and registration requests don't need federation. They're simple REST calls to a single service. Routing them through the GraphQL layer would add unnecessary latency and complexity.
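The route split and its two limits can be modeled in a few lines. This is a hypothetical Python model, not Kong's configuration format or plugin code: the upstream names are made up, and the fixed-window counter (one counter per client per wall-clock minute) is an assumed algorithm chosen for simplicity.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass(frozen=True)
class Route:
    prefix: str            # path prefix that selects this route
    upstream: str          # hypothetical upstream name
    requires_auth: bool    # whether credentials must be validated first
    limit_per_minute: int  # requests allowed per client per minute


# Hypothetical model of the two routes described above.
ROUTES = [
    Route("/graphql", "apollo-router", requires_auth=True, limit_per_minute=60),
    Route("/auth", "user-service", requires_auth=False, limit_per_minute=20),
]


def match_route(path: str) -> Optional[Route]:
    for route in ROUTES:
        # Match the prefix exactly or as a whole path segment (so /graphqlx does not match).
        if path == route.prefix or path.startswith(route.prefix + "/"):
            return route
    return None


@dataclass
class FixedWindowLimiter:
    # (client id, route prefix, minute index) -> requests seen in that window
    counts: dict = field(default_factory=dict)

    def allow(self, client_id: str, route: Route, now: float) -> bool:
        key = (client_id, route.prefix, int(now // 60))
        self.counts[key] = self.counts.get(key, 0) + 1
        return self.counts[key] <= route.limit_per_minute


def handle(path: str, client_id: str, has_valid_token: bool,
           limiter: FixedWindowLimiter, now: float) -> Tuple[int, Optional[str]]:
    """Return (status code, upstream) for one request."""
    route = match_route(path)
    if route is None:
        return 404, None
    if route.requires_auth and not has_valid_token:
        return 401, None  # rejected at the edge; never reaches the Router
    if not limiter.allow(client_id, route, now):
        return 429, None
    return 200, route.upstream
```

With these limits, the 21st login attempt from one client inside the same minute gets a 429, while /auth requests never need a token.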

Authentication Flow

The authentication flow. Kong validates credentials and sets user context headers. The Router propagates those headers to every subgraph involved in the query plan.

When Kong validates an incoming request, it doesn't just check the signature and expiration. It extracts claims from the payload and sets them as request headers:

- x-user-id: the authenticated user's UUID
- x-user-role: customer or admin
- x-user-email: the user's email address

These headers travel through the Router to every subgraph. Each subgraph reads them for authorization decisions without needing to re-validate credentials — Kong already did that.
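A minimal sketch of the claim-to-header step, assuming HS256-signed JWTs whose payload carries sub, role, email, and exp claims. The claim names, secret, and function names are hypothetical, and in Kong this logic would live in a plugin rather than in Python. One detail matters for the trust model described above: client-supplied x-user-* headers are stripped before the verified values are set, since otherwise a client could claim any identity simply by sending those headers itself.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are base64url without padding; restore padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    """Mint a test token (hypothetical stand-in for the token issued at login)."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(sig)}"


def verify_and_extract(token: str, secret: bytes, headers: dict,
                       now: Optional[float] = None) -> dict:
    """Verify an HS256 JWT and return a copy of headers carrying x-user-* claims.

    Raises ValueError if the token is malformed, has a bad signature, or is expired.
    """
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, sig_b64 = parts
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
        raise ValueError("bad signature")
    claims = json.loads(_b64url_decode(payload_b64))
    # Tokens without "exp" are treated as expired (a conservative assumption).
    if claims.get("exp", 0) <= (time.time() if now is None else now):
        raise ValueError("token expired")

    # Strip client-supplied identity headers first so they cannot be forged.
    out = {k: v for k, v in headers.items() if not k.lower().startswith("x-user-")}
    out["x-user-id"] = claims["sub"]
    out["x-user-role"] = claims["role"]
    out["x-user-email"] = claims["email"]
    return out
```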

Apollo Router: Supergraph Composition

The Router's job begins with a composed supergraph schema. The Rover CLI's rover supergraph compose command takes the four subgraph schemas and produces a single supergraph SDL:

The output is supergraph.graphql — a schema that includes every type, field, and directive from all subgraphs, annotated with metadata about which subgraph owns each field.
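As a toy model of that ownership metadata (not how Rover works internally), the sketch below merges hypothetical per-subgraph field lists into a (type, field) to owner table. Real composition does much more, including validating entity keys and type compatibility and recording ownership as join__ directives in the supergraph; it also permits a field to be resolved by several subgraphs when marked @shareable, which this sketch simplifies to "only key fields may repeat".

```python
# Hypothetical per-subgraph view: type name -> fields that subgraph defines.
SUBGRAPHS = {
    "product-catalog": {"Product": ["id", "name", "price"]},
    "inventory": {"Product": ["id", "inStock", "quantity"]},
    "user-service": {"Product": ["id", "reviews"], "User": ["id", "email"]},
}

# Entity key fields may appear in several subgraphs; every other field has one owner.
KEY_FIELDS = {"Product": {"id"}, "User": {"id"}}


def compose(subgraphs: dict) -> dict:
    """Return {(type, field): owning subgraph}, rejecting duplicate non-key fields."""
    owners = {}
    for subgraph, types in subgraphs.items():
        for type_name, fields in types.items():
            for field_name in fields:
                key = (type_name, field_name)
                if key in owners and field_name not in KEY_FIELDS.get(type_name, set()):
                    raise ValueError(f"{type_name}.{field_name} defined in both "
                                     f"{owners[key]} and {subgraph}")
                owners.setdefault(key, subgraph)  # key fields keep their first definer
    return owners


def fields_for(owners: dict, subgraph: str) -> list:
    """Fields this toy model would route to the given subgraph."""
    return sorted(f"{t}.{f}" for (t, f), owner in owners.items() if owner == subgraph)
```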

Router Configuration

Three configuration sections matter:

Header propagation ensures user context reaches every subgraph. Without this, subgraphs would receive anonymous requests and couldn't enforce authorization.
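Conceptually, header propagation is an allowlist applied to every outgoing subgraph request. The Python below only illustrates that behavior; in the Router it is declared in the YAML configuration's headers section, and the function names here are hypothetical. Unrelated client headers, such as cookies, are not forwarded.

```python
# Allowlist of user-context headers set by Kong (names from the text above).
PROPAGATED = ("x-user-id", "x-user-role", "x-user-email")


def subgraph_headers(incoming: dict, propagate: tuple = PROPAGATED) -> dict:
    """Build headers for one subgraph fetch, copying only allowlisted headers."""
    # HTTP header names are case-insensitive, so normalize before matching.
    lowered = {k.lower(): v for k, v in incoming.items()}
    out = {"content-type": "application/json"}
    for name in propagate:
        if name in lowered:
            out[name] = lowered[name]
    return out


def fan_out(incoming: dict, subgraphs: list) -> dict:
    """Every subgraph in the query plan receives the same user context."""
    return {sg: subgraph_headers(incoming) for sg in subgraphs}
```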

CORS is configured at the Router level as a fallback. Kong handles CORS for external traffic, but the Router needs its own CORS config for development scenarios where it's accessed directly.

Telemetry exports OpenTelemetry traces to the collector. The Router creates a root span for every query and child spans for each subgraph fetch, providing automatic distributed tracing without any application code.
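The shape of that trace can be sketched with a tiny hand-rolled span recorder. This is not the OpenTelemetry API, and the Span class and span names are hypothetical; the point is only the structure: one root span per query, one child span per subgraph fetch, all sharing a trace ID.

```python
import itertools
import uuid
from dataclasses import dataclass, field
from typing import Optional

_span_ids = itertools.count(1)


@dataclass
class Span:
    name: str
    trace_id: str
    parent_id: Optional[int] = None
    span_id: int = field(default_factory=lambda: next(_span_ids))
    children: list = field(default_factory=list)

    def child(self, name: str) -> "Span":
        # Children inherit the trace ID so a tracing backend can stitch the tree together.
        span = Span(name, self.trace_id, parent_id=self.span_id)
        self.children.append(span)
        return span


def trace_query(subgraph_fetches: list) -> Span:
    """One root span for the query, one child span per subgraph fetch."""
    root = Span("query", trace_id=uuid.uuid4().hex)
    for subgraph in subgraph_fetches:
        root.child(f"fetch {subgraph}")
    return root


def render(span: Span, depth: int = 0) -> list:
    """Indented text view of the span tree."""
    lines = ["  " * depth + span.name]
    for c in span.children:
        lines.extend(render(c, depth + 1))
    return lines
```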

Query Planning in Action

The Router's query planner is where federation's intelligence lives. Given a query that spans multiple subgraphs, the planner determines the minimum number of subgraph requests needed, identifies which can run in parallel, and sequences dependent fetches.
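The sequencing half of that job can be illustrated as a dependency graph: a fetch may start once every fetch it depends on has finished, and fetches that become ready at the same time form one parallel stage. The sketch below uses Python's graphlib with hypothetical fetch names. Apollo's real planner derives these dependencies from the supergraph schema and emits Sequence, Parallel, and Fetch plan nodes, none of which this models.

```python
from graphlib import TopologicalSorter


def plan_stages(depends_on: dict) -> list:
    """Group fetches into stages; fetches within a stage do not depend on each other."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies form a cycle
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything runnable now can go in parallel
        stages.append(ready)
        sorter.done(*ready)
    return stages


# Hypothetical fetches for a cross-service product query.
PRODUCT_QUERY = {
    "product-catalog": set(),             # needs nothing
    "inventory": {"product-catalog"},     # needs the product id
    "user-service": {"product-catalog"},  # needs the product id
}
```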

Example: Cross-Service Product Query

This single query touches three subgraphs. The Router's query plan:

The query plan for a cross-service product detail query. Step 1 fetches the core product. Step 2 resolves extensions from Inventory and User Service in parallel. The merge step combines all results.

Step 1 is sequential — the Router must know the product's id before it can resolve extensions. The Router sends a single GraphQL request to the Product Catalog:

Step 2 runs in parallel. The Router sends entity resolution requests to both Inventory and User Service simultaneously:

The merge combines all three responses into the structure the client requested. Total latency: Step 1 + max(Step 2a, Step 2b), not the sum of all three fetches.
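The latency arithmetic follows from how the two steps are awaited. In this asyncio sketch the subgraph calls are simulated with sleeps (the fetch helper, delays, and response fields are all hypothetical): Step 1 is awaited alone, then the Inventory and User Service fetches are started together with asyncio.gather, so wall-clock time lands near 0.05 + max(0.10, 0.08) = 0.15 seconds instead of the 0.23-second sum.

```python
import asyncio
import time


async def fetch(subgraph: str, delay: float, data: dict) -> dict:
    # Hypothetical stand-in for an HTTP call to a subgraph; the sleep simulates latency.
    await asyncio.sleep(delay)
    return data


async def execute_product_query(product_id: str) -> dict:
    # Step 1 (sequential): the product's id must be known before entity fetches.
    product = await fetch("product-catalog", 0.05, {"id": product_id, "name": "Widget"})
    # Step 2 (parallel): both entity fetches run concurrently.
    inventory, reviews = await asyncio.gather(
        fetch("inventory", 0.10, {"inStock": True}),
        fetch("user-service", 0.08, {"reviews": [{"rating": 5}]}),
    )
    # Merge: combine all three responses into the shape the client requested.
    return {**product, **inventory, **reviews}


start = time.perf_counter()
result = asyncio.run(execute_product_query("p-1"))
elapsed = time.perf_counter() - start
```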

Batch Entity Resolution

For list queries, the Router batches entity resolution. If a products query returns 20 products, the Router sends a single _entities request with all 20 representations to each extending subgraph.
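The batched call is an ordinary GraphQL operation against the subgraph's _entities field, with one representation per product. A sketch of assembling that request body, assuming Product is keyed by id and that Inventory exposes hypothetical inStock and quantity fields:

```python
import json

# _entities takes a list of _Any representations; the selection set is hypothetical.
ENTITIES_QUERY = """
query($representations: [_Any!]!) {
  _entities(representations: $representations) {
    ... on Product { inStock quantity }
  }
}
"""


def entities_request(product_ids: list) -> dict:
    """Build one _entities request body covering every product in the list."""
    representations = [{"__typename": "Product", "id": pid} for pid in product_ids]
    return {"query": ENTITIES_QUERY, "variables": {"representations": representations}}


body = entities_request([f"p-{i}" for i in range(1, 21)])
payload = json.dumps(body)  # a single POST body for all 20 products
```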

One request to the Inventory subgraph resolves all 20 products' inventory. This is why GetInventoryBatch exists in the gRPC API — the Inventory subgraph's entity resolver calls the same batch database query regardless of whether the request came from the Router or from the Product Catalog's gRPC client.
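On the subgraph side, one rule is easy to miss: _entities must return results in the same order as the representations it received. A minimal sketch of an order-preserving batch resolver, where get_inventory_batch is a hypothetical stand-in for the batch database query behind GetInventoryBatch and the table contents are made up; ids with no inventory row yield None so positions still line up.

```python
# Hypothetical inventory table: product id -> stock level.
INVENTORY_DB = {"p-1": 5, "p-2": 0, "p-3": 12}
CALLS = []  # records each batch call so the single-query behavior is visible


def get_inventory_batch(product_ids: list) -> dict:
    """Hypothetical stand-in for one batched lookup (e.g. WHERE product_id = ANY($1))."""
    CALLS.append(list(product_ids))
    return {pid: INVENTORY_DB[pid] for pid in product_ids if pid in INVENTORY_DB}


def resolve_entities(representations: list) -> list:
    """Resolve all representations with one lookup, preserving their order."""
    ids = [rep["id"] for rep in representations]
    rows = get_inventory_batch(ids)  # one query regardless of list size
    results = []
    for pid in ids:
        if pid in rows:
            results.append({"__typename": "Product", "id": pid,
                            "quantity": rows[pid], "inStock": rows[pid] > 0})
        else:
            results.append(None)  # keep the slot so positions match the request
    return results
```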

Request Tracing Through Both Gateways

A complete request trace shows the full path:

Without federation, the same data would require the client to make three separate API calls (products, inventory, reviews) and merge the results in the browser. With federation, the Router handles the orchestration, the client gets one response, and the total latency is set by the longest chain of dependent fetches (here, Step 1 plus the slower of the two parallel fetches) rather than by the sum of all fetches.

Looking Forward

The gateway layer handles routing, authentication, and query orchestration. But when something goes wrong — a slow subgraph, a failed database query, a timeout — you need visibility across the entire request path.

In Part 5, we'll examine how OpenTelemetry instrumentation across three language runtimes produces distributed traces that span from Kong through the Router to every subgraph, backed by the Grafana LGTM+ stack — Tempo for traces, Prometheus for metrics and SLO tracking, Loki for logs, Pyroscope for profiling, and Alloy for log collection — with spanmetrics connectors, tail sampling, and cross-signal correlations.

This article is part of the Polyglot GraphQL Federation series. Continue to Part 5: Observability Across the Polyglot Stack for distributed tracing, metrics, and logging.